Maintaining data hygiene is how businesses avoid the ‘garbage in, garbage out’ trap in data use and analytics. It is an ongoing process of deduplicating records, standardizing schemas and formats, and purging decayed data. Without data hygiene, it is hard to trust business data for high-stakes decisions.

Managing millions of records in your business listing database is a challenging and complicated process that requires various data hygiene tools, techniques and expertise. The records need constant validation and verification so that the data remains accurate and usable. We did that for a leading data aggregator serving the hospitality industry, where we cleaned and enriched over 17 million records every year. The contact details often had a wrong phone number, a duplicate entry or a defunct email. Multiple studies have found that opportunities lost due to inaccurate insights from poor data quality can cost organizations 15–25% of revenue.

Business listing databases power travel portals, lead generation platforms, directories, marketplaces and many other revenue-generating portals. But the data is dynamic and changes all the time. Phone numbers change and emails become outdated, making the data unusable. Data cleansing services ensure business listing databases remain accurate, reliable, and continuously updated. Read on for the data hygiene best practices you must follow to keep your data accurate and up to date.

What is data hygiene for a business listing database

Data hygiene is a process that ensures your business data stays clean and updated for improved decision making, high productivity and brand protection. The process involves checking business records for accuracy and removing inaccuracies of any kind, be it misspellings, duplicates, outdated or incomplete data, or improper formatting.

Data hygiene for business listings focuses on external business entities, with the primary goal of maintaining accurate contact information and business profiles. It is a good idea to stay alert and look out for signs that indicate your business needs deep data cleaning.

And yes, the responsibility for supplying clean data lies with the data aggregator and not the end user. As a business looking for clean data, it is always a good idea to partner with data cleansing companies.

Before we discuss data hygiene best practices further, let us take a quick look at what a clean business listing record must look like.

A clean business listing record must have:

- Valid Data

- Clean Records

- Updated Records

- Rich Business Attributes

- Standardized Data Format

- Non-Duplicate Records

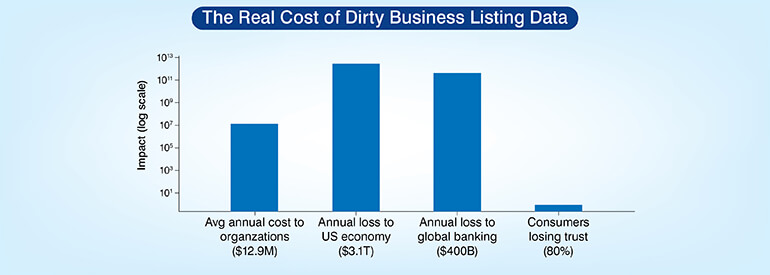

What dirty business listing data really costs you

Dirty data is not just a data management issue; it has a direct impact on your revenue and financials.

8 data hygiene best practices for up-to-date business listing databases

Business records are dynamic. They keep changing due to leadership changes, relocations, changed phone numbers or closed email accounts. That is why business listing databases quickly become unusable without maintenance and require constant monitoring. The 8 data hygiene best practices below, if followed, cover basic data hygiene and build the foundation for accurate data all the time.

Audit your existing database before cleaning anything

This is the first thing to do before you explore data hygiene solutions for your business listings. You need a complete, planned and structured audit to evaluate the current data. Your data audit should look for duplicates, outdated data, missing data and inconsistencies, and any issues found must be noted before you implement any data hygiene workflows.

This is a very important step because unless you are clear about your requirements, you may end up wasting effort and resources. Only a clear understanding of the current state of your data can help you plan data hygiene processes that directly tackle your data issues.

A data quality score is a measurable way to assess your database health. You can calculate the percentage of records that meet the clean criteria, assign a score and use it as a benchmark for further improvement. You can score records on non-duplication, valid contact information, consistent formatting, verified business existence and any other parameter essential to your data hygiene needs.
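
As a minimal sketch of such a score, the Python below runs a few illustrative checks on each record and reports the pass rate per criterion along with the share of records that pass all of them. The field names (`name`, `phone`, `email`), the 10-digit phone rule, the loose email pattern and the duplicate key are assumptions made for illustration, not a standard.

```python
import re

# Deliberately loose pattern: it checks shape only, not deliverability.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def audit(records):
    """Return per-criterion pass rates and an overall quality score (in %)."""
    seen = set()
    passes = {"non_duplicate": 0, "valid_phone": 0, "valid_email": 0}
    clean = 0
    for rec in records:
        phone = rec.get("phone", "")
        # Assumed duplicate key: normalized name plus phone number.
        key = (rec.get("name", "").strip().lower(), phone)
        checks = {
            "non_duplicate": key not in seen,
            "valid_phone": phone.isdigit() and len(phone) == 10,
            "valid_email": bool(EMAIL_RE.match(rec.get("email", ""))),
        }
        seen.add(key)
        for name, ok in checks.items():
            passes[name] += ok
        clean += all(checks.values())
    total = len(records) or 1  # avoid division by zero on an empty list
    report = {name: round(100 * n / total, 1) for name, n in passes.items()}
    report["quality_score"] = round(100 * clean / total, 1)
    return report
```

Running `audit` before and after a cleanup cycle gives two comparable numbers, which is what makes the score usable as a benchmark.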

You can use data profiling tools such as Talend Data Quality, Informatica Data Quality or any other tool suitable for you. These tools can automatically analyze datasets to detect anomalies such as missing fields and duplicate records.

Once your audit is done, you are clear about the database issues and can prioritize and implement data hygiene processes accordingly. Data audits also help you identify the regulatory requirements that apply to your data so that you do not miss any compliance obligation.

Set data quality KPIs for every field in your listing database

For a healthy business listing database, every field such as name, address, phone number and email should meet a defined level of accuracy and completeness. But how do you do that? You need to set data quality KPIs for each field.

Setting a benchmark helps identify the level of data error and how it needs to be treated. It also helps define the level of error that is acceptable in your organization or project and the errors that are not acceptable at all. For a sales team, for example, an incorrect phone number is simply not acceptable, while a missing secondary attribute may not matter much.

Define KPIs for each field in your listing database based on project requirements and evaluate them using specific data quality indicators. There are no universally accepted benchmark ranges for fields, but you can follow certain commonly accepted thresholds.

| KPI | Definition | Accepted Threshold |
|---|---|---|
| Accuracy Rate | % of records with verified, correct field values | ≥ 95% |
| Completeness Rate | % of records with all mandatory fields populated | ≥ 90% |
| Duplication Rate | % of records that are duplicates | ≤ 2% |
| Freshness Score | % of records validated within 90 days | ≥ 85% |

There are many data quality and profiling tools available that you can use to track field-level KPIs. Data quality monitoring platforms, data profiling tools and data governance dashboards help detect missing values, formatting errors, duplicate records or incorrect contact details. You must track KPIs continuously through automated dashboards and initiate corrective action whenever a metric breaches its threshold.
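
One way to turn the thresholds from the table above into an automated check is sketched below. It measures completeness, duplication and freshness from the records themselves and lists the KPIs that breach their limit. Accuracy is left out because it needs verification against an external source. The record layout (a `last_validated` date and the mandatory field names) and the duplicate key are assumptions for illustration.

```python
from datetime import date

# Thresholds from the KPI table. "min": the value must not fall below the
# limit. "max": the value must not exceed it.
THRESHOLDS = {
    "completeness_rate": ("min", 90.0),
    "duplication_rate": ("max", 2.0),
    "freshness_score": ("min", 85.0),
}
MANDATORY = ("name", "address", "phone", "email")  # assumed mandatory fields


def measure(records, today):
    """Compute the three KPIs, each as a percentage of all records."""
    total = len(records)
    if total == 0:
        raise ValueError("cannot measure KPIs on an empty record set")
    complete = sum(all(r.get(f) for f in MANDATORY) for r in records)
    # Assumed duplicate key: normalized name plus phone number.
    unique = len({(str(r.get("name", "")).strip().lower(), r.get("phone")) for r in records})
    fresh = sum((today - r["last_validated"]).days <= 90 for r in records)
    return {
        "completeness_rate": 100 * complete / total,
        "duplication_rate": 100 * (total - unique) / total,
        "freshness_score": 100 * fresh / total,
    }


def breaches(metrics):
    """Return the names of the KPIs that violate their threshold."""
    failed = []
    for name, (kind, limit) in THRESHOLDS.items():
        value = metrics[name]
        if (kind == "min" and value < limit) or (kind == "max" and value > limit):
            failed.append(name)
    return failed
```

A scheduled job could call `measure` on each refresh and raise an alert when `breaches` returns a non-empty list.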

Standardize contact data at the point of entry

When you catch mistakes at the point of entry, the data becomes simpler to manage. The practice is to enforce consistent formats and naming conventions at the entry point itself.

This practice is important because it prevents dirty data from spreading across the database and helps maintain a cleaner database. It is also cost-effective: fixing an error at the entry point costs much less than correcting the same record after it reaches the production system. This is especially useful when performing large-scale data cleansing.

Implementing entry-point standardization needs clear rules for validation and normalization. Create a Standard Operating Procedure (SOP) for data entry that spells out exactly which rules to follow when capturing and formatting data before it enters the database.

Examples include standardized phone number formats, uniform address formats, standardized salutations, consistent capitalization and naming conventions, and other similar rules as per your project requirements.

Automated validation at the entry point checks that submitted data follows the required structure before it is stored in the system.
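
The rules above could be expressed as a small normalization step that runs before a record is stored. The sketch below is illustrative only: the target phone format (`+1` followed by 10 digits), the one-digit country code and the field names are assumptions, and a real SOP would define its own formats.

```python
import re


def normalize_phone(raw, country_code="1"):
    """Return the number as +<country_code><10 digits>, or None if it does not fit.

    Assumes a one-digit country code and 10-digit national numbers.
    """
    digits = re.sub(r"\D", "", raw)  # drop spaces, dashes, brackets, plus signs
    if len(digits) == 11 and digits.startswith(country_code):
        digits = digits[len(country_code):]
    if len(digits) != 10:
        return None
    return f"+{country_code}{digits}"


def normalize_record(raw):
    """Apply the formatting rules before the record is stored."""
    return {
        "name": " ".join(raw["name"].split()),   # collapse stray whitespace
        "email": raw["email"].strip().lower(),   # one canonical casing for emails
        "phone": normalize_phone(raw["phone"]),  # None signals a rejected number
        "state": raw["state"].strip().upper(),   # region codes in upper case
    }
```

Returning `None` for a phone number that does not fit lets the entry form reject the value on the spot instead of storing a guess.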

Run real-time validation to catch errors before they compound

Real-time validation checks data against predefined rules as soon as it is entered or submitted, so that incorrect records are stopped before they are stored in your database.

This practice helps organizations prevent errors from compounding and maintain higher data accuracy across their listing databases. Bad data inside the CRM can replicate across systems and marketing tools, making corrections very difficult at a later stage.

For this you can use rule-based validation engines, data profiling tools, external verification APIs and deduplication algorithms. These tools help detect and correct inaccuracies before they enter the database.

Different techniques can be used to check records. With cross-reference validation, you verify incoming records against trusted external data sources, for example by validating a business address through an API or comparing company addresses with business directories. This confirms that the business exists and the address information is legitimate.

Data type validation ensures that the values entered into each field match the expected format, for example that the phone number field has only numeric values. This prevents incorrect data from being stored in the database. Similarly, range checking ensures that numeric or structured values fall within logical boundaries.

You can also write custom validation logic to capture subtler data inconsistencies that simple validation rules may miss.
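
To make data type validation and range checking concrete, here is a minimal sketch of a rule-based validator. The fields `year_founded` and `rating`, and the boundaries chosen for them, are hypothetical examples rather than fields every listing database has.

```python
def validate(record, current_year):
    """Return a list of readable errors; an empty list means the record passes."""
    errors = []

    # Data type validation: the phone field may contain digits only.
    phone = record.get("phone")
    if not (isinstance(phone, str) and phone.isdigit()):
        errors.append("phone must contain digits only")

    # Type and range check: the founding year must be an integer in a plausible window.
    year = record.get("year_founded")
    if not isinstance(year, int):
        errors.append("year_founded must be an integer")
    elif not 1800 <= year <= current_year:
        errors.append("year_founded out of range")

    # Range check: a rating must sit on the 0 to 5 scale.
    rating = record.get("rating")
    if not isinstance(rating, (int, float)) or not 0 <= rating <= 5:
        errors.append("rating must be between 0 and 5")

    return errors
```

Collecting every error instead of stopping at the first one lets the entry form show all problems in a single pass.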

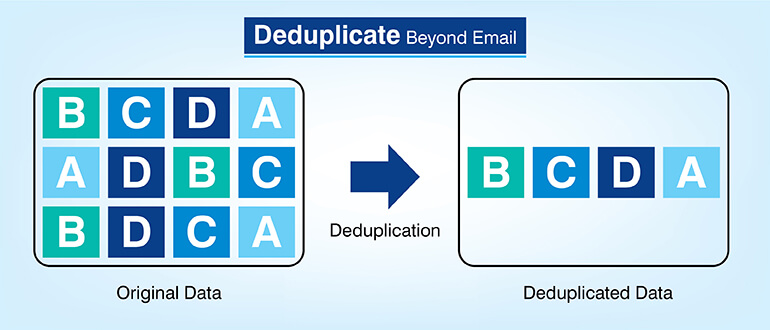

Deduplicate records using multi-key matching, not just email

Here, duplicate business listings are identified by comparing several fields together, such as name, address and contact details. Multi-key matching improves duplicate detection by evaluating several attributes at the same time. Relying on a single identifier like email may not give accurate results.

This is very important for business databases because records often originate from multiple sources, and deduplication on a single field like email can be ineffective. Studies have shown that duplicate data can cost businesses up to 20% of their annual revenue, and many duplicate records may go undetected if deduplication relies on email only.

Multi-key matching compares several attributes simultaneously and detects whether any two records represent the same entity. Fuzzy matching is a technique used to identify duplicate records with slight variations. It helps detect duplicates caused by spelling variations, abbreviations or inconsistent formatting.

Then there are deterministic and probabilistic matching. Deterministic matching is a simple method that uses exact rules to identify duplicates, so it may miss duplicates when the data varies. Probabilistic matching, on the other hand, assigns confidence scores based on similarities across multiple fields and detects near matches that deterministic rules would miss.
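
The difference between the two approaches can be illustrated with Python's standard `difflib` module. In this sketch, string similarity from `SequenceMatcher` stands in for a fuzzy matcher, and a weighted sum across fields stands in for a confidence score. The weights and the 0.85 threshold are assumptions chosen for illustration and would need tuning on real data.

```python
from difflib import SequenceMatcher

# Assumed field weights; they sum to 1 so the score stays between 0 and 1.
WEIGHTS = {"name": 0.5, "address": 0.3, "phone": 0.2}


def similarity(a, b):
    """Fuzzy similarity of two strings, from 0.0 (different) to 1.0 (identical)."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


def deterministic_match(r1, r2):
    """Exact rule: every key field must be identical."""
    return all(r1[f] == r2[f] for f in WEIGHTS)


def match_score(r1, r2):
    """Weighted multi-key score combining similarity across all key fields."""
    return sum(w * similarity(r1[f], r2[f]) for f, w in WEIGHTS.items())


def is_probable_duplicate(r1, r2, threshold=0.85):
    """Flag the pair as a likely duplicate when the score reaches the threshold."""
    return match_score(r1, r2) >= threshold
```

For two records that differ only by "Ltd" versus "Limited" and "St" versus "Street", `deterministic_match` returns `False` while `is_probable_duplicate` returns `True`, which is the gap that fuzzy, multi-key scoring is meant to close.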

Once duplicates are identified, organizations need to decide which data to retain while merging records. The goal is to maintain one authoritative version of each business listing.
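
A merge step needs an explicit rule for which value survives. The sketch below uses one possible rule, assumed here for illustration: for each field, keep the non-empty value from the most recently verified record. The `verified_on` field is hypothetical, and other rules such as source priority are equally valid.

```python
from datetime import date


def build_golden_record(duplicates, fields):
    """Merge duplicates, keeping the newest non-empty value for each field."""
    newest_first = sorted(duplicates, key=lambda r: r["verified_on"], reverse=True)
    golden = {}
    for field in fields:
        # Take the first non-empty value when walking from newest to oldest.
        golden[field] = next((r[field] for r in newest_first if r.get(field)), None)
    return golden
```

Given one record verified recently with an empty email and an older record that still has the email, the merged entry keeps the newer name and phone and falls back to the older email.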

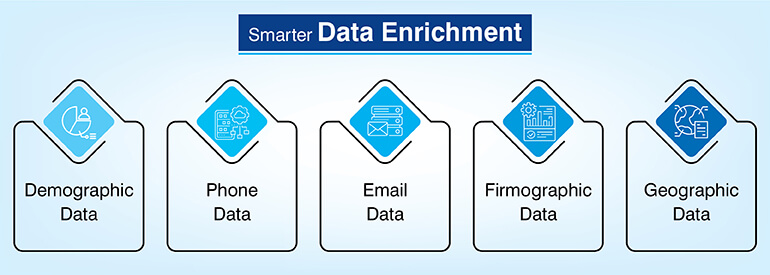

Enrich and append records with firmographic and contact data

This process involves adding more data to existing records to make the basic entry more complete and more useful. The additional information captured from external sources is validated and verified before it is added to the database. Additional information such as firmographics and revenue range transforms basic contact details into actionable business intelligence.

Enrichment is important because the additional data fills gaps and gives a better understanding of organizations, which in turn supports better segmentation, targeting and sales pitches.

Some of the fields that can be added include location, revenue, technology stack information and sometimes even social media activity. All this information is a boon for marketing and sales teams.

The attributes need to be refreshed at regular intervals so that they stay current. Sources could be primary, secondary or third party.

In one engagement, enriched customer profiles with appropriate segmentation helped a video communication company market its services effectively, along with targeted upsell and cross-sell, driving better customer retention and improved sales.

Automate data hygiene using rule-based and AI-assisted tools

Certain data errors are complex and are often missed by traditional rule-based validation, and that is where AI-assisted data hygiene tools are needed. They can handle large-scale datasets and improve duplicate detection accuracy. AI tools keep learning from historical data corrections and hence perform better over time, allowing organizations to maintain clean databases without relying entirely on manual processes.

Some of the AI-assisted technologies used in data hygiene include machine learning based entity resolution, which identifies duplicate records more accurately. AI-powered anomaly detection identifies unusual patterns that may indicate incorrect data. NLP techniques can be used to analyze unstructured text fields and standardize them.

Automation and AI-assisted tools significantly improve the efficiency of maintaining clean datasets, but since business data is dynamic, monitoring remains very important. Certain tasks also still need human intervention, with human specialists reviewing flagged records to ensure accurate decisions.

Build a continuous data decay prevention system – not a one-time fix

Data cleansing is not a one-time process because data decays at a very fast rate. Your records need constant validation, enrichment, and re-deduplication to stay accurate and up to date. The process should be embedded in your workflow.

Even clean data decays over time as companies relocate, phone numbers change, emails become obsolete, mergers happen and companies shut down. All these changes make data outdated and unreliable.

Although there is no fixed schedule for validating and updating business records, industry best practices recommend refreshing different data fields at intervals based on how frequently they change.

| Field | Recommended Re-validation Frequency |
|---|---|
| Email addresses | Every 60 days |
| Phone numbers | Every 90 days |
| Job titles / decision-makers | Every 6 months |
| Business addresses | Every 6 months |
| Company firmographics | Annually |
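
A refresh schedule like the one in the table above can be enforced with a simple due-date check. The sketch below covers the phone, job title, address and firmographics rows. The field keys are hypothetical, "every 6 months" is approximated as 182 days, and each record is assumed to store a last-validated date per field.

```python
from datetime import date, timedelta

# Intervals taken from the table; 6 months is approximated as 182 days.
REVALIDATE_AFTER = {
    "phone": timedelta(days=90),
    "job_title": timedelta(days=182),
    "address": timedelta(days=182),
    "firmographics": timedelta(days=365),
}


def fields_due(last_validated, today):
    """Return the fields whose last validation is at least one interval old."""
    return [
        field
        for field, interval in REVALIDATE_AFTER.items()
        if field in last_validated and today - last_validated[field] >= interval
    ]
```

Running this check daily over the database yields a work queue of exactly the fields that need re-validation, instead of re-checking every record on one fixed cycle.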

Apart from the scheduled validation timelines, Change Data Capture (CDC) mechanisms can also be used to monitor source systems. CDC helps detect any change related to company relocation, leadership updates etc. The systems keep monitoring the source and automatically trigger record updates when changes occur. This allows databases to remain near-real-time representations of business information.
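
Production CDC usually reads changes from a source system's transaction log or change feed. As a simplified stand-in for that idea, the sketch below compares two snapshots of the same records and lists field-level changes that could trigger an update. The snapshot layout (dictionaries keyed by a record ID) and the field names are assumptions for illustration.

```python
def detect_changes(previous, current, watched_fields):
    """Compare two snapshots keyed by record ID and list field-level changes."""
    changes = []
    for record_id, new in current.items():
        old = previous.get(record_id)
        if old is None:
            # The record did not exist in the earlier snapshot.
            changes.append((record_id, "record", None, "added"))
            continue
        for field in watched_fields:
            if old.get(field) != new.get(field):
                changes.append((record_id, field, old.get(field), new.get(field)))
    return changes
```

Each tuple holds the record ID, the field, the old value and the new value, which is enough to drive a targeted re-validation of just the affected records.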

Apart from CDC, organizations need to watch for changes that require immediate updates outside the regular refresh schedule. Regular data maintenance is also required for regulatory compliance. GDPR requires organizations to ensure that personal data remains accurate, up to date, and removable upon request.

When to outsource business listing database management

Database management is a complex process, especially as the dataset grows, and managing it in-house can be challenging. Outsourcing should be considered when database scale exceeds internal validation capacity, client churn increases despite internal data hygiene efforts, or you are unable to meet compliance requirements.

Choosing an outsourcing partner requires evaluating specific operational capabilities. The partner should have the required skills and security certifications and, most importantly, a good reputation in the market. They should offer dedicated data quality dashboards that provide visibility into validation progress. And lastly, they should have trained data specialists who can manually review edge cases.

Conclusion

Maintaining clean data is important for business growth, and it requires constant monitoring. It is not a one-time process. Implementing structured validation cycles helps prevent database decay. As data volumes grow, organizations need to move beyond scheduled validation. Strong data hygiene best practices must be followed to reduce errors and improve operational efficiency. Organizations that invest in proactive data hygiene frameworks make faster, more confident business decisions.

Frequently asked questions about business listing data hygiene

A full database audit should be done every quarter to assess the hygiene of your database. After this, run rolling field-level validation every 60-90 days. Fields that change more frequently, like phone numbers and emails, should be checked more often. Slower-changing fields can follow a longer validation cycle.

Going by the industry benchmark, a duplicate rate of ≤2% is well accepted, while a duplicate rate between 2% and 5% indicates moderate hygiene issues that must be corrected. Duplication beyond 5% needs serious attention as it can lead to fragmented customer profiles.

Data standardization has nothing to do with data accuracy; it is all about data consistency. The formats and data structure must be uniform and consistent. Examples would be a uniform phone number format or standardized company naming conventions. Data validation on the other hand verifies data accuracy. Data validation compares records against trusted external reference sources.

Data aggregators maintain accurate and up-to-date records, aligning with GDPR’s data accuracy principle. The data hygiene workflows include audit trails that track updates, corrections and deletions.

Data decay happens very fast in business listing databases. Some of the reasons include company relocation and restructuring, mergers and acquisitions, phone number reassignment or deactivation, and business closures or rebranding.

In data hygiene terms, the single authoritative version of an entity within a database is called a golden record. This consolidated entry is created by merging multiple duplicate records into one. This way, only the most accurate and verified value for each field is retained by the system. Creating golden records helps remove conflicting or redundant information and build a trusted source of truth for downstream analytics and operations.

Even though AI excels at detecting patterns, anomalies, and duplicate records at scale and machine learning models can automate classification, matching, and anomaly detection, human review is still necessary for low confidence matches and ambiguous cases. You also need manual oversight for final quality check in compliance decisions and contextual interpretation. Practical approaches combine AI-driven automation with human quality control for safety and efficiency.

Snehal Joshi, Head of Business Process Management at HabileData, leads a 500-member team of data professionals, having successfully delivered 500+ projects across B2B data aggregation, real estate, ecommerce, and manufacturing. His expertise spans data hygiene strategy, workflow automation, database management, and process optimization, making him a trusted voice on data quality and operational excellence for enterprises worldwide.